NEW FROM IMAGINE5

Support us and get our magazine for free

Become a member of Imagine5 or donate what you can. By supporting us, you ensure we can remain advertising-free and unbiased, and can bring all our stories and events to everyone around the world. As a member, you will receive our latest magazine.

IT’S HAPPENING

Why the climate will soon be starring in your favourite TV show

Gardeners unite: Why wild seed stewards are growing a movement

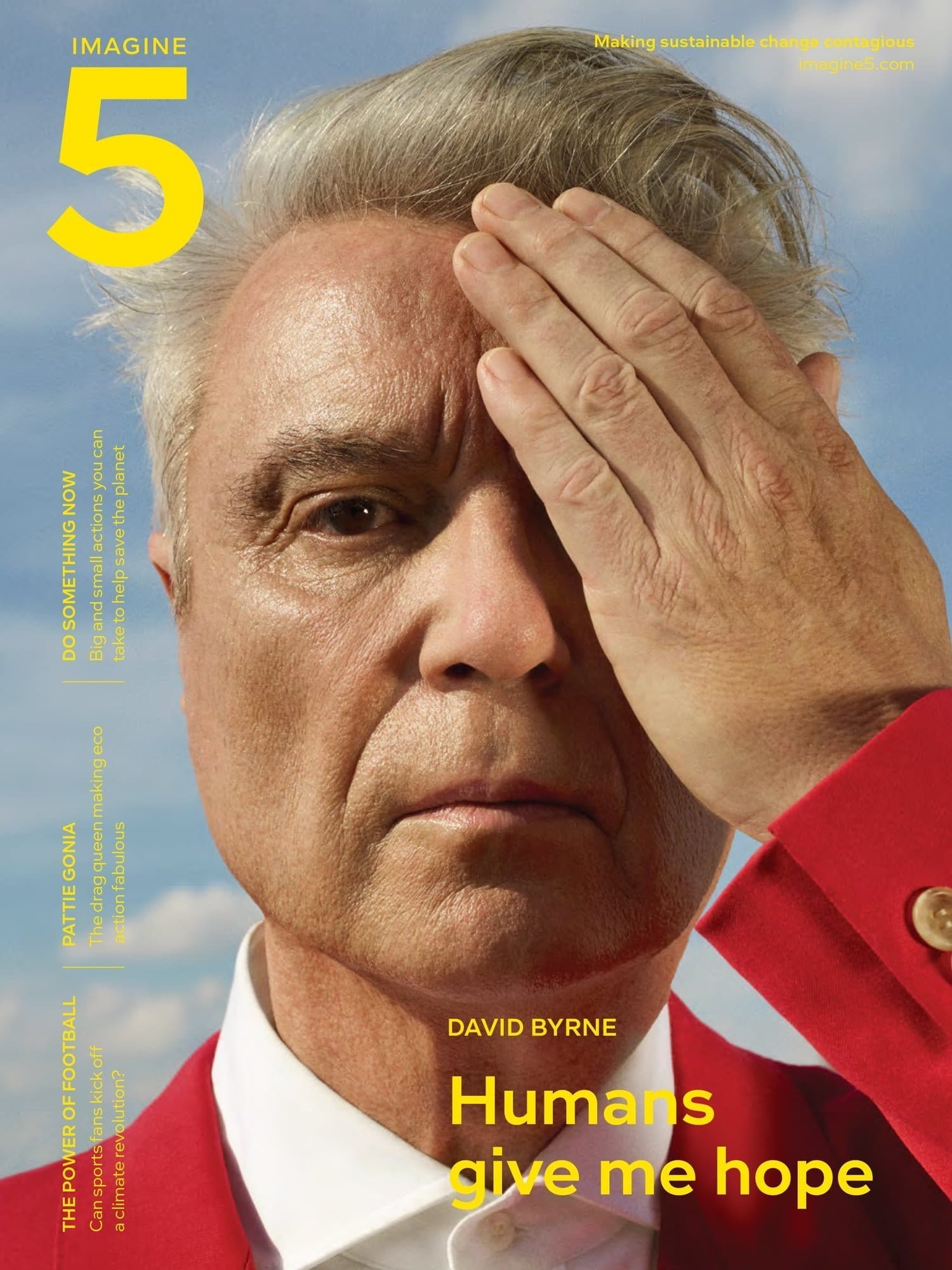

Get the Imagine5 magazine

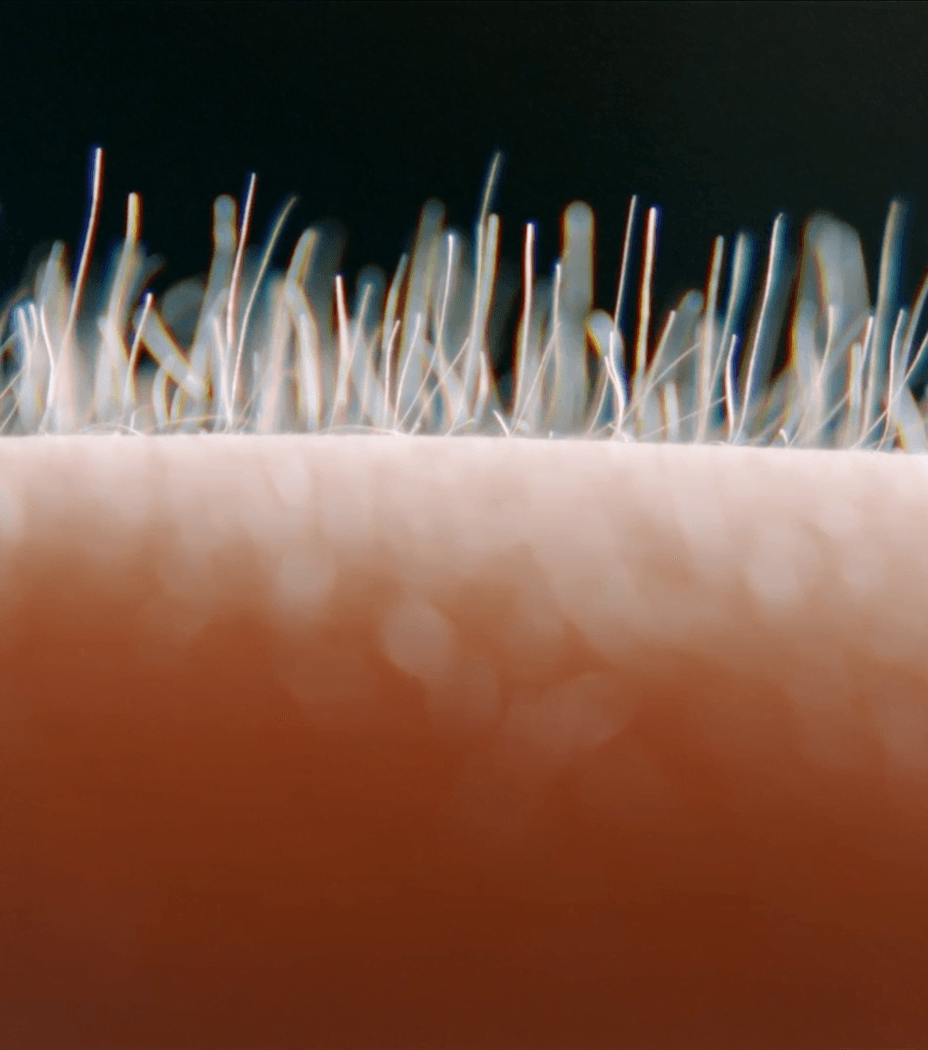

180 pages of stories to move you, people to inspire you, images to knock you sideways, and tips to try out for yourself. A distillation of our best work, designed for sharing. And the paper smells really good when you flick through the pages.

€25 plus shipping
